
In the fierce race to power artificial intelligence (AI), the spotlight has long shone on graphics processing units (GPUs) from leaders like Nvidia (NVDA). Yet, beneath the surface, a quieter revolution is unfolding in the memory chips that make those advanced processors truly hum. Micron Technology (MU) is emerging as the dark-horse contender poised to claim the crown as the best breakout growth story in the AI semiconductor space.

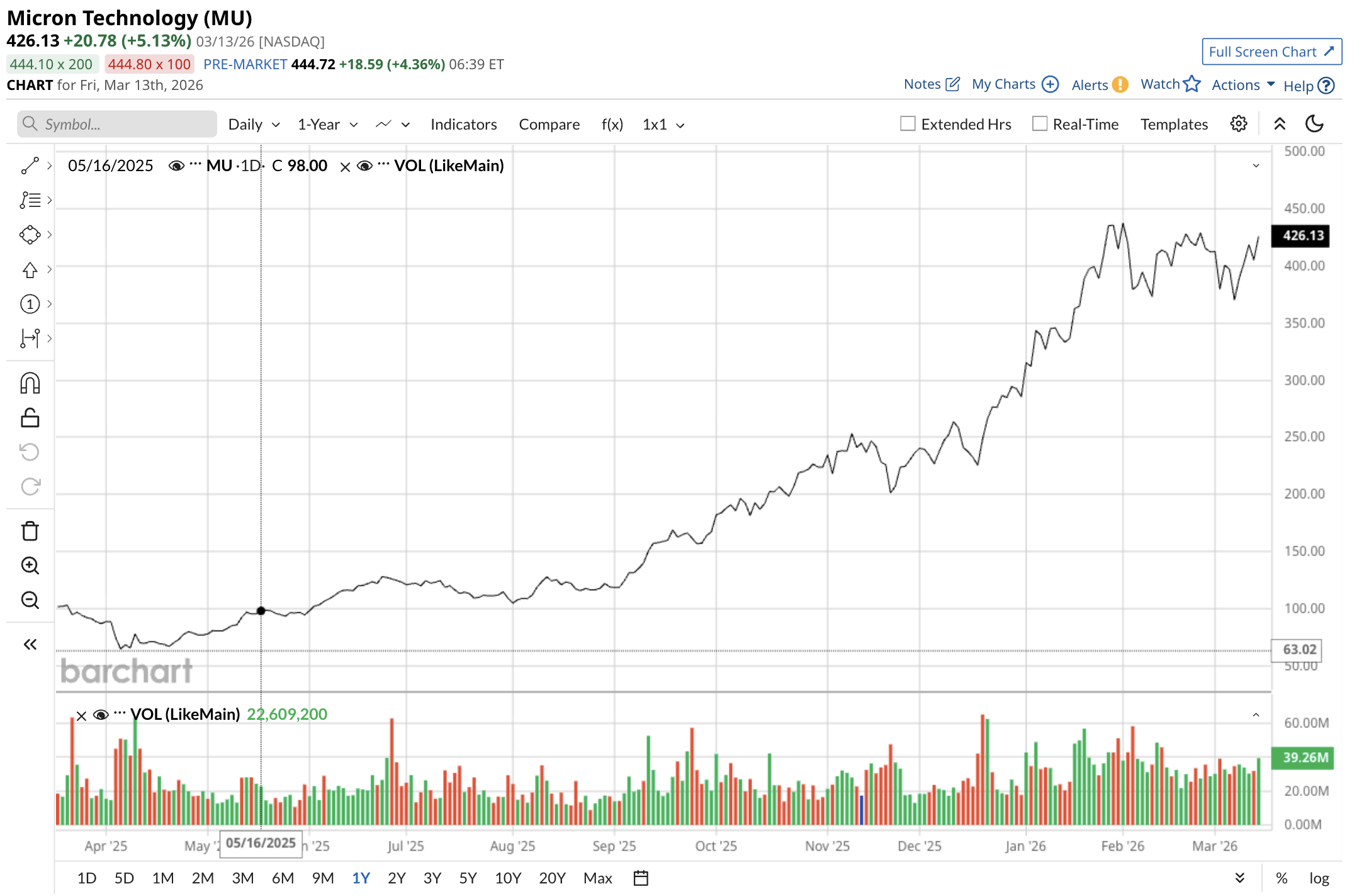

While competitors chase headlines with flashy chip designs, Micron’s focus on high-performance DRAM and high-bandwidth memory (HBM) places it at the heart of a demand cycle that shows no signs of slowing. Neither do the shares: MU stock is up 61% year-to-date (YTD).

AI Has a Voracious Appetite

The AI boom’s true bottleneck isn’t raw compute power; it’s the lightning-fast memory required to feed data-hungry models. Every leap in Nvidia's architecture, from the H100 to the upcoming Rubin platform, demands substantially more DRAM per chip. Where earlier generations needed roughly 80 gigabytes, Rubin chips are projected to consume around 300 gigabytes or more, nearly four times as much, to train, infer, and reason at scale. This surge has turned memory into the strategic choke point for data-center operators worldwide.

As long as Nvidia's advanced AI accelerators remain white-hot — and every indicator suggests they will for years — Micron’s DRAM supply chain sits at the epicenter of unlimited expansion opportunities.

Demand for leading-edge DRAM and HBM has already outstripped industry capacity, with Micron’s production lines fully allocated through 2026. The company’s role as one of the few U.S.-based suppliers of these critical components adds geopolitical resilience, allowing the firm to capture share as hyperscalers diversify away from dominant Asia-based players.

Partnerships with Nvidia have accelerated qualification of Micron’s HBM3e and next-generation HBM4 solutions, locking in multi-year revenue visibility. This isn’t a fleeting spike — it’s the foundation of a multi-hundred-billion-dollar AI memory market that Micron is uniquely equipped to serve across data centers, edge computing, and even automotive applications.

Micron Is Ready to Roar Higher

On March 15, Micron delivered a powerful signal of its commitment to meeting this explosion in demand. The company completed its acquisition of Powerchip Semiconductor Manufacturing's Tongluo P5 site in Taiwan. The deal hands Micron roughly 300,000 square feet of ready-to-use 300-millimeter cleanroom space, directly earmarked for ramping up advanced DRAM output — including the high-bandwidth variants essential for AI workloads.

Plans for a second full-scale fabrication facility on the same campus are already underway, effectively doubling capacity at the location. This strategic move instantly bolsters Micron’s ability to scale production without the multi-year delays typical of greenfield builds, ensuring it can keep pace with Nvidia’s relentless roadmap.

All Eyes Are on March 18

With earnings scheduled for release after the market closes on March 18, investors are bracing for fresh evidence of this momentum. Analysts widely expect the fiscal second-quarter report to underscore accelerating revenue from AI-driven memory sales, margin expansion from sold-out capacity, and upward revisions to full-year guidance. The timing could not be more opportune. As hyperscalers race to deploy next-gen AI infrastructure, Micron’s DRAM shipments are set to become the invisible enabler behind every major deployment.

Beyond the immediate catalysts, Micron’s broader portfolio provides ballast. While AI commands the growth narrative, steady demand from traditional servers, consumer electronics, and industrial segments offers diversification. But it's the AI tailwind — tied inextricably to Nvidia's dominance — that unlocks the most compelling upside. So long as cutting-edge GPUs continue devouring vast quantities of high-speed memory, Micron’s production ramps and technological edge translate into compounding returns that can dwarf those of more visible chip names.

The Bottom Line on Micron Stock

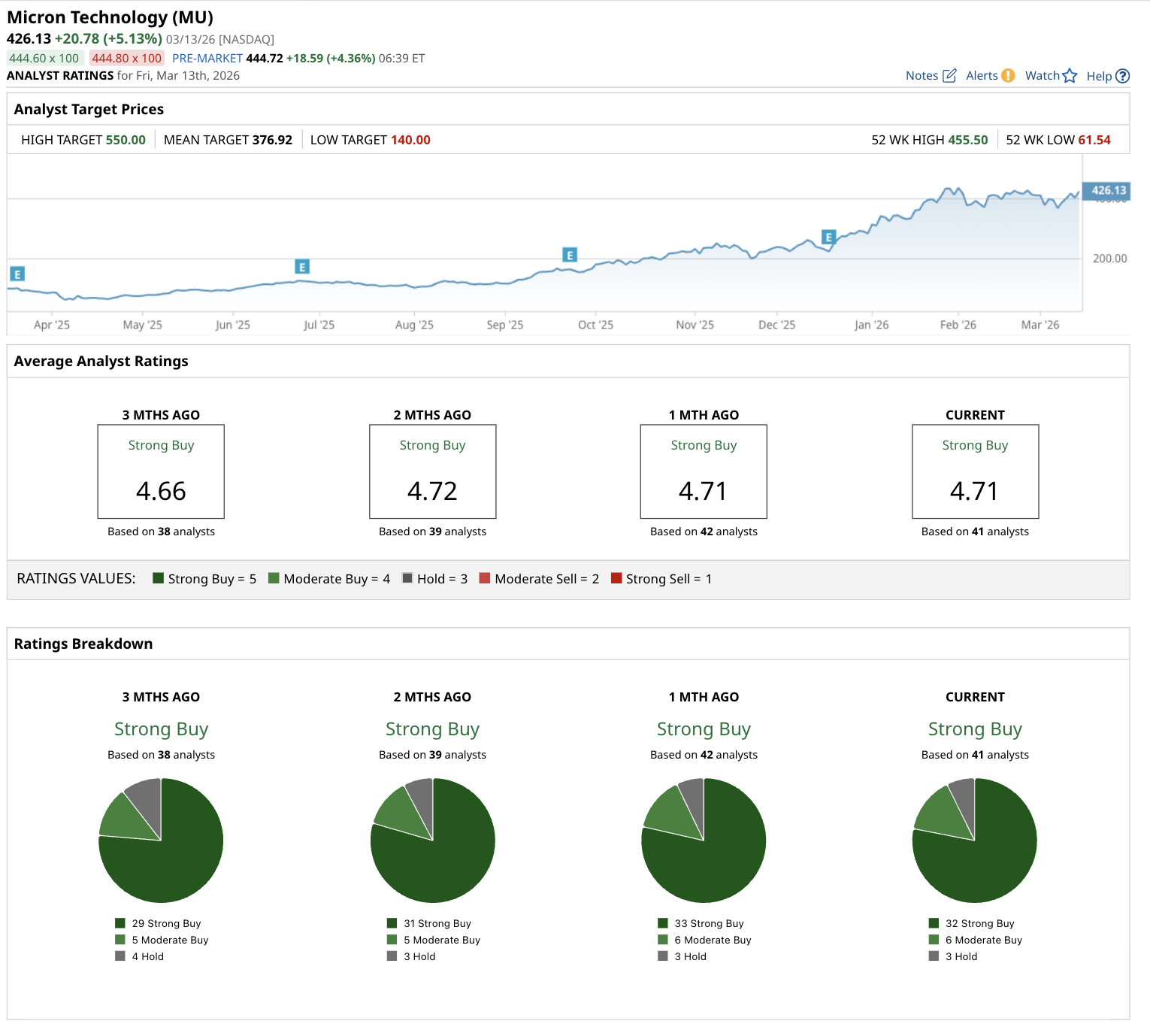

Micron stands out as one of the most cheaply valued AI chip stocks on the market today. Trading at a forward price-to-earnings (P/E) multiple of 12.3 times — remarkably modest, especially relative to peers — the company boasts a PEG ratio well below 1.0. Analyst projections call for earnings growth of 361% in fiscal 2026 followed by 53% growth in fiscal 2027.
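To see why the PEG ratio lands well below 1.0, the arithmetic can be sketched as follows. This is a rough illustration, assuming the common definition of PEG as the forward P/E divided by the expected earnings growth rate expressed in percent; data providers differ on which growth window they use, so published PEG figures may not match. The function name `peg_ratio` is a hypothetical helper, and the inputs are the figures quoted above.

```python
def peg_ratio(forward_pe: float, growth_pct: float) -> float:
    """PEG under the assumed definition: forward P/E / growth rate in percent."""
    if growth_pct <= 0:
        # PEG is not meaningful when earnings are flat or shrinking.
        raise ValueError("growth_pct must be positive")
    return forward_pe / growth_pct


FORWARD_PE = 12.3          # forward P/E quoted in the article
GROWTH_FY2026_PCT = 361.0  # projected fiscal 2026 earnings growth, in percent
GROWTH_FY2027_PCT = 53.0   # projected fiscal 2027 earnings growth, in percent

peg_fy2026 = peg_ratio(FORWARD_PE, GROWTH_FY2026_PCT)
peg_fy2027 = peg_ratio(FORWARD_PE, GROWTH_FY2027_PCT)

print(f"PEG on fiscal 2026 growth: {peg_fy2026:.2f}")  # 0.03
print(f"PEG on fiscal 2027 growth: {peg_fy2027:.2f}")  # 0.23
```

Under this assumed definition, the ratio stays below 1.0 even when the slower fiscal 2027 growth estimate is used.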

This combination of earnings momentum and discounted valuation creates the setup for potentially explosive share-price appreciation as the market fully prices in Micron’s role in the AI memory supercycle. For investors who recognize that memory is the new oil of artificial intelligence, the opportunity at current levels could prove transformative.

On the date of publication, Rich Duprey did not have (either directly or indirectly) positions in any of the securities mentioned in this article. All information and data in this article are solely for informational purposes. For more information, please view the Barchart Disclosure Policy.
